
The default is domain and subdomain level. The crawling restrictions are automatically generated for App URL constraints.

Even though the robots.txt file tells search engine crawlers not to request the listed pages or files, this only helps avoid overloading the site with requests; it does not prevent attacks. Security tip: robots.txt doesn't prevent crawling. Attackers won't respect a robots.txt file when targeting your site, so why train your scanner to ignore part of your attack surface? It's a common misconception that having a robots.txt file stops scanners and search engines from crawling a site. Crawl URLs: for broader scan coverage, enable crawling of URLs in robots.txt and any sitemaps found on your site.

The Stay on port setting forces the scan to crawl only URLs that use the same ports as those specified in the seed URLs. A CSS file (an autoload resource needed to populate the web page in a browser) may be requested even after the max links limit has been reached. The crawling process continues until the total number of URLs discovered reaches the Max links to crawl setting. The additional URLs are then recursively used for the crawling process again. The scan engine works in 2 phases: crawling and attacking. During the crawling phase, the seed URLs (a combination of app-level URLs and scan config URLs) are used as starting points for an algorithm that requests the content at each URL and then interrogates the response for additional URLs. You can limit the number of URLs to crawl.

In the Crawling Restrictions subsection, you can restrict what will be crawled in scans run on this config. You can choose a protocol (HTTP or HTTPS) and a subdomain. In the Scan Config URLs listing, you can add more seed URLs that only apply to this config. Any scans on an app will use the app-level URLs as seed URLs. Seed URLs dictate what URLs are used as the base for a scan. The inheritance relates to the seed URLs used when scanning.
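
To make the crawling phase a little more concrete, here is a minimal sketch of the idea described above: start from seed URLs, pull links out of each response, and keep going until a maximum number of URLs has been discovered, optionally staying on the ports used by the seeds. This is not the scan engine's actual code. The names `crawl`, `fetch`, `max_links`, and `stay_on_port` are hypothetical, and it walks a queue (breadth-first) rather than recursing, which is simply an easy way to write it.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkExtractor(HTMLParser):
    """Collects href values from anchor tags in an HTML response."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def effective_port(url):
    """Return the explicit port, or the scheme default when none is given."""
    parts = urlsplit(url)
    if parts.port is not None:
        return parts.port
    return {"http": 80, "https": 443}.get(parts.scheme)


def crawl(seed_urls, fetch, max_links=100, stay_on_port=True):
    """Discover URLs from the seeds until max_links have been found.

    `fetch` is a hypothetical callable taking a URL and returning the
    response body as a string, or None when nothing could be retrieved.
    """
    allowed_ports = {effective_port(url) for url in seed_urls}
    discovered, seen, queue = [], set(), deque()

    # The seed URLs are the starting points and count toward the limit.
    for url in seed_urls:
        if url not in seen and len(discovered) < max_links:
            seen.add(url)
            discovered.append(url)
            queue.append(url)

    while queue:
        url = queue.popleft()
        body = fetch(url)
        if body is None:
            continue
        parser = LinkExtractor()
        parser.feed(body)
        for href in parser.links:
            if len(discovered) >= max_links:
                return discovered
            link = urljoin(url, href)  # resolve relative links
            if link in seen:
                continue
            if stay_on_port and effective_port(link) not in allowed_ports:
                continue
            seen.add(link)
            discovered.append(link)
            queue.append(link)
    return discovered
```

You can try it without touching a real site by passing a dictionary's `get` method as `fetch`, for example `crawl(["http://example.test/"], pages.get, max_links=3)`, where `pages` maps made-up URLs to small HTML strings.
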
These are inherited into all scan configs created under that app. The URLs specified for the entire app appear in the App URLs listing. In the Scan URLs subsection, you can add URLs that you want to scan with this config, including those already specified in the app itself. Set the following options to control the app scan. This will go over how to set the scan scope to decide how your app is scanned. You can set the app-level URLs within the app or set the scan config URLs from within the scan config.

It is listed on the site under the ‘Deliberately Vulnerable’ -> ‘Web Apps’ navbar option, and it is called (drumroll…) ‘Juice Shop’.

You can use scan scope to decide which URLs are attacked or crawled.

For the purposes of the rest of this series, the specific section of attackdefense that we will use is the OWASP Juice Shop Vulnerable Web Application (see pun from previous paragraph). At the time I’m writing this, the site is free to use, and all you need is a Google account. The best and easiest way to get started with practicing web hacking (that I have found) is to use. All of this is just the beginning of what will eventually lead to a mastery of Burp Suite, so now is a good time to be excited. (You’ll understand that pun in a second). Maybe even squeeze some “Juice” out of it. After that, we will take a look at scoping our testing and using Burp’s built-in crawl and scan tools to try to find some low-hanging fruit.

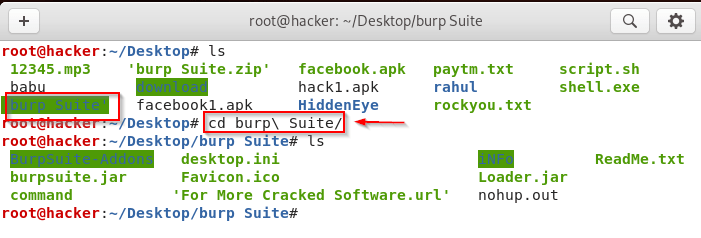

To start, we need to set up a good practice target. This part in the series is going to go over some basic uses of Burp to get you on the road to your first finding. Thankfully, there are many apps to test with online that are free to use in a safe environment. But where do we start? Well, I don’t recommend hitting the open information highway and testing whatever you see.

Now it’s time to hit the web and hack some apps. So you made it through Part 1? Congrats on your success – that was the most boring part.
